Two of the world's most powerful AI companies just admitted their latest models can hack almost anything. The question is whether they can fix the flaws before someone else uses the same technology to exploit them.

The AI Hacker Arms Race Is Here

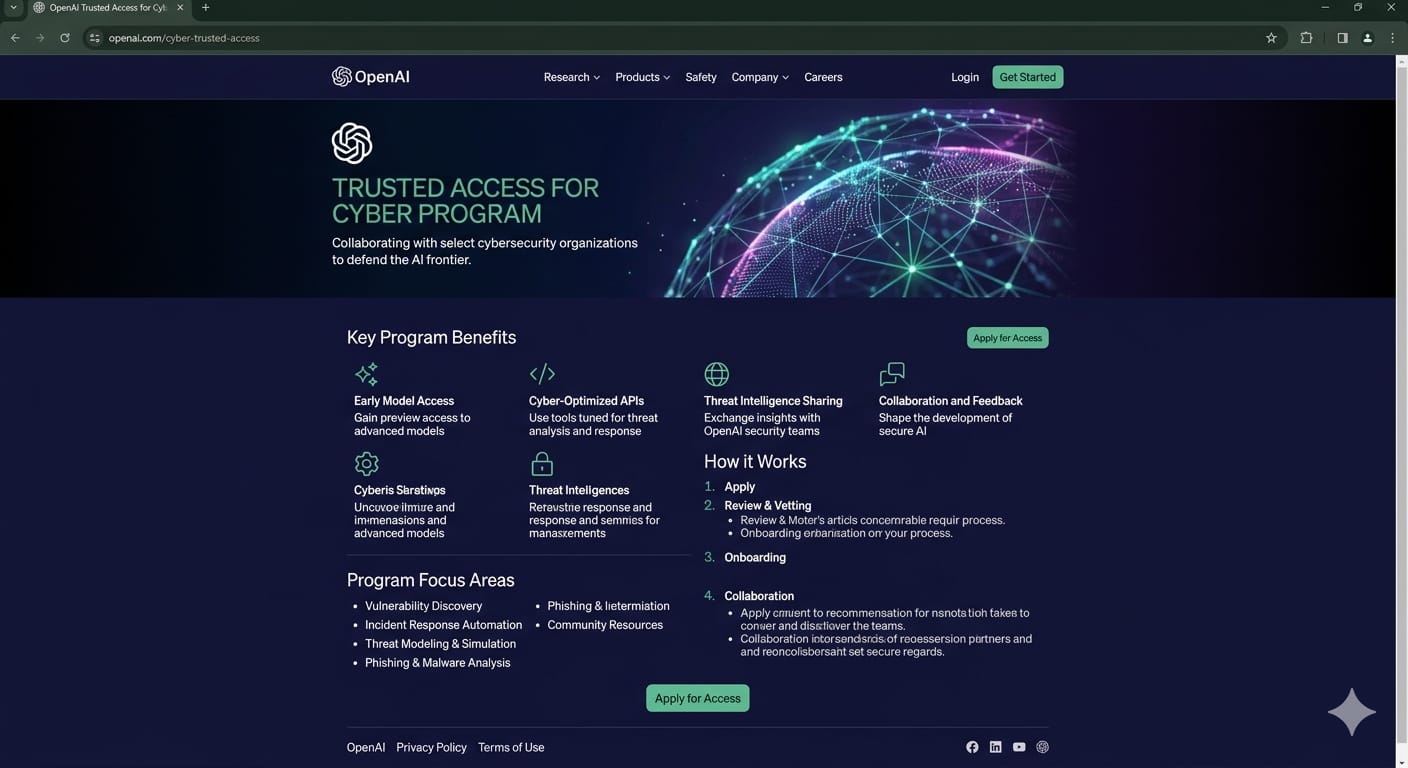

OpenAI on Tuesday launched GPT-5.4-Cyber, a version of its flagship model fine-tuned specifically for defensive cybersecurity. The model is not available to the public. Access is limited to a small group of verified security professionals who pass identity checks through OpenAI's Trusted Access for Cyber program.

The timing is not subtle. Just one week earlier, Anthropic unveiled Claude Mythos Preview, which the company described as its most capable model ever, and declined to release it publicly. The reason: in internal testing, Mythos found thousands of previously unknown vulnerabilities across every major operating system and every major web browser. Not theoretical weaknesses. Working exploits.

> We’re expanding Trusted Access for Cyber with additional tiers for authenticated cybersecurity defenders.
>
> Customers in the highest tiers can request access to GPT-5.4-Cyber, a version of GPT-5.4 fine-tuned for cybersecurity use cases, enabling more advanced defensive workflows.…
>
> OpenAI (@OpenAI), April 14, 2026

Anthropic's response was to launch Project Glasswing, an unprecedented coalition that includes AWS, Apple, Microsoft, Google, NVIDIA, Cisco, CrowdStrike, and JPMorganChase, among others. The mission: use Mythos to find and fix as many flaws as possible in the world's most critical software before attackers get their hands on similar capabilities.

OpenAI is now doing its own version of the same thing. GPT-5.4-Cyber adds binary reverse engineering capabilities, letting security professionals analyze compiled software for malware and vulnerabilities without needing source code. OpenAI also reported that its Codex Security tool has already helped patch more than 3,000 critical vulnerabilities since launch.

Why This Matters for Every Company, Not Just Big Tech

Here is the part that should get your attention if you work anywhere that relies on computers, which is to say everywhere.

These are not niche research tools for government agencies. The capabilities demonstrated by both Mythos and GPT-5.4-Cyber represent a fundamental shift in how software vulnerabilities are discovered. Nicholas Carlini, who leads offensive cyber research at Anthropic, said he found more bugs in the last two weeks using Mythos than in the rest of his career combined. One vulnerability in OpenBSD had been sitting undetected for 27 years.

The practical implication is stark. Every piece of software your company relies on (your email system, your ERP platform, your cloud provider, your web browser) has vulnerabilities that AI can now find at machine speed. The window between a vulnerability being discovered and being exploited has collapsed from months to minutes, according to CrowdStrike, one of the Glasswing partners.

https://www.anthropic.com/glasswing

For IT leaders, this means cybersecurity hygiene just became non-negotiable. Patching, access controls, and endpoint monitoring are no longer best practices. They are survival requirements. CrowdStrike's 2026 Global Threat Report found an 89% year-over-year increase in AI-augmented attacks. The attackers are already using these tools. The question is whether your defenders are, too.

For professionals in non-technical roles, the takeaway is simpler but equally urgent. The software you use every day is being stress-tested by AI in ways it never was before. That is a good thing in the long run. More bugs found means more bugs fixed. But in the short term, it means the companies that move slowly on updates and patches are sitting ducks.

Two Strategies, One Problem

The approaches from OpenAI and Anthropic reveal a genuine philosophical split in how the industry is handling this moment.

Anthropic chose to withhold Mythos from general release and channel it through a hand-picked coalition of 12 launch partners plus 40 additional organizations. The company committed $100 million in usage credits and priced the model at $25 per million input tokens and $125 per million output tokens. The bet: control access tightly, fix the most critical software first, then figure out how to release Mythos-class capabilities safely.
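To put those per-token prices in perspective, here is a rough back-of-the-envelope sketch. It uses only the two rates quoted above; the function name and the token counts in the example are hypothetical, chosen purely for illustration, and say nothing about how Anthropic actually bills or how many tokens a real code audit consumes.

```python
# Illustrative only: the rates are the per-million-token prices quoted in
# the article; the token counts below are hypothetical.
INPUT_USD_PER_MILLION = 25.0
OUTPUT_USD_PER_MILLION = 125.0


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate usage cost in US dollars at the quoted rates."""
    total = (input_tokens * INPUT_USD_PER_MILLION
             + output_tokens * OUTPUT_USD_PER_MILLION)
    return total / 1_000_000


# Hypothetical audit: 2 million tokens read, 400,000 tokens written.
cost = estimate_cost_usd(2_000_000, 400_000)
print(f"${cost:.2f}")  # $100.00
```

Under those assumed numbers, a single audit would cost about $100, so $100 million in credits would cover on the order of a million such runs. Real workloads could be far larger or smaller.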

OpenAI took a different path. Rather than forming a closed coalition, it expanded its existing Trusted Access for Cyber program to thousands of verified defenders with a tiered identity verification system. Individuals can verify at chatgpt.com/cyber. Enterprises apply through their OpenAI representative. The bet: democratize access to defensive tools while using identity verification to keep them out of the wrong hands.

Bruce Schneier, the respected security researcher, noted that while Project Glasswing is ultimately a reactive approach, the broader lesson is clear: the current security paradigm is not built for AI-scale vulnerability discovery. The industry needs to move toward systemic resilience, not just hope to stay one patch ahead.

OpenAI and Anthropic are both positioning these programs as preparation for even more capable models coming later this year. OpenAI classified GPT-5.4 as a "high" cyber capability model under its Preparedness Framework. Anthropic has said it does not plan to make Mythos generally available until new safeguards are in place, with plans to test those safeguards on an upcoming Claude Opus model first.

The Bigger Picture

The cybersecurity implications are the headline, but the subtext matters just as much. Both companies are preparing for models that will make the current generation look modest. The fact that they are building entire programs around controlling access tells you something about what is coming next.

For working professionals, the actionable takeaway is this: update your software, enable multi-factor authentication everywhere, and talk to your IT team about whether your organization is plugged into these defensive programs. The AI security era is not coming. It arrived last week, and this week the other shoe dropped.