ChatGPT's image generator used to guess. Now it thinks. OpenAI launched ChatGPT Images 2.0 on April 21, 2026, and the new model reasons through your request before drawing a single pixel. It renders legible text in five languages, produces up to eight consistent images from a single prompt, and ships free to every ChatGPT and Codex user. Paid tiers unlock a "thinking" mode, but even the base model is a clear step up from the image tools most professionals were using last week.

From Random Generator to Visual Thought Partner

The upgrade is structural, not cosmetic. OpenAI built reasoning natively into the model. When a user picks a thinking-capable mode in ChatGPT, the system can search the web for real-time information, generate multiple distinct images from one prompt, and double-check its own outputs before handing them over. Sam Altman, in the launch livestream, compared the leap to "going from GPT-3 to GPT-5 all at once."

The model's API name is gpt-image-2, and it operates in two modes. Instant returns fast outputs for quick iterations. Thinking takes longer, reasoning through structure, composition, and consistency before generating. Thinking mode is what makes multi-image storyboards, manga sequences, and character sheets hold together across frames, something previous image models notoriously struggled with.
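
For developers, the two-mode split can be sketched as a small request builder. This is a hypothetical illustration, not OpenAI's documented gpt-image-2 schema: the `mode` field name is an assumption (how the real API selects Instant versus Thinking is not confirmed here), `n` mirrors the image-count parameter of OpenAI's existing Images API, the cap of eight comes from the launch claims above, and `build_image_request` is an illustrative helper name.

```python
from dataclasses import asdict, dataclass
from typing import Optional

MODEL_NAME = "gpt-image-2"       # API name given in the article
MAX_IMAGES_PER_PROMPT = 8        # "up to eight consistent images from a single prompt"
MODES = ("instant", "thinking")  # the two modes the article describes


@dataclass
class ImageRequest:
    model: str
    prompt: str
    n: int     # number of images to return for this prompt
    mode: str  # HYPOTHETICAL field name; the real API's mode switch is not confirmed


def build_image_request(prompt: str, frames: int = 1, mode: Optional[str] = None) -> dict:
    """Build an illustrative request payload (an assumption, not OpenAI's documented schema)."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if not 1 <= frames <= MAX_IMAGES_PER_PROMPT:
        raise ValueError(f"frames must be between 1 and {MAX_IMAGES_PER_PROMPT}")
    if mode is None:
        # Routing choice for this sketch: multi-frame work (storyboards,
        # character sheets) defaults to Thinking; single quick drafts to Instant.
        mode = "thinking" if frames > 1 else "instant"
    if mode not in MODES:
        raise ValueError(f"mode must be one of {MODES}")
    return asdict(ImageRequest(model=MODEL_NAME, prompt=prompt, n=frames, mode=mode))


storyboard = build_image_request("Six-panel manga sequence, same heroine in every frame", frames=6)
draft = build_image_request("Quick sketch of a coffee-shop logo")
print(storyboard["mode"], storyboard["n"])  # thinking 6
print(draft["mode"], draft["n"])            # instant 1
```

Defaulting multi-frame jobs to Thinking is a design choice in this sketch, reflecting the point that cross-frame consistency is where the slower mode earns its extra latency and cost; callers can still force either mode explicitly.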

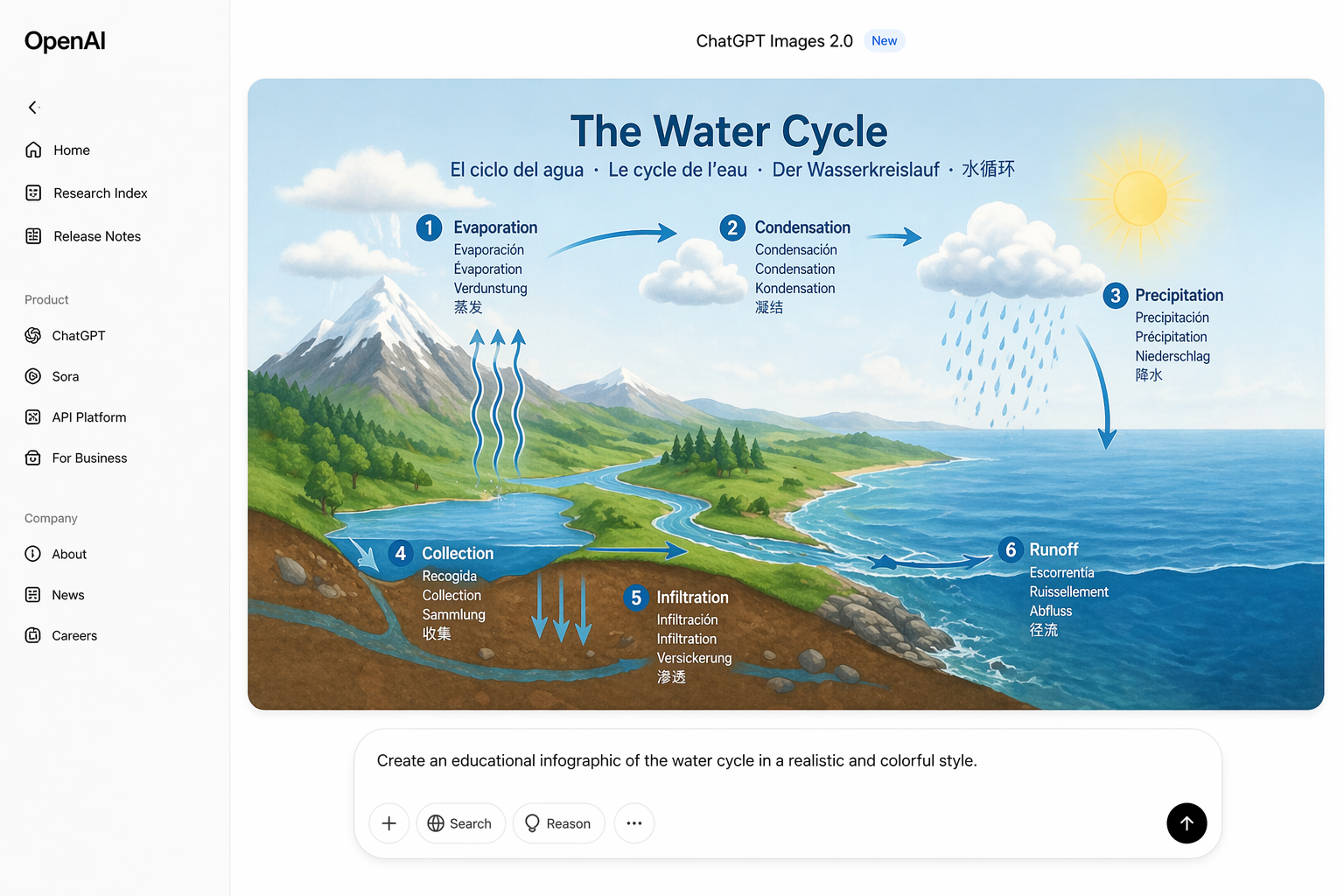

The technical headlines matter for anyone who ships visual work. The API now supports resolutions up to 2K for production use. Aspect ratios run from 3:1 to 1:3, covering banners, posters, vertical mobile assets, and infographics without the usual cropping dance. The model renders high-fidelity text in Japanese, Korean, Chinese, Hindi, and Bengali, ending the era of AI-generated posters with gibberish scribbles where words should be.
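
Teams wiring these limits into an asset pipeline might pre-check requested sizes before spending tokens. The sketch below assumes "2K" means a 2048-pixel cap on the longest edge (the article does not define it), and treats 3:1 and 1:3 as inclusive bounds; `size_problems` is a hypothetical helper, not part of any OpenAI SDK.

```python
from fractions import Fraction

MAX_EDGE_PX = 2048        # ASSUMPTION: "2K" read as a 2048-pixel cap on the longest edge
WIDEST = Fraction(3, 1)   # 3:1, e.g. web banners
TALLEST = Fraction(1, 3)  # 1:3, e.g. vertical mobile assets


def size_problems(width: int, height: int) -> list:
    """Return the reasons a width x height request falls outside the stated limits."""
    if width <= 0 or height <= 0:
        return ["width and height must be positive"]
    problems = []
    longest = max(width, height)
    if longest > MAX_EDGE_PX:
        problems.append(f"longest edge {longest}px exceeds {MAX_EDGE_PX}px")
    # Exact rational arithmetic avoids float rounding right at the 3:1 boundary.
    ratio = Fraction(width, height)
    if not TALLEST <= ratio <= WIDEST:
        problems.append(f"aspect ratio {ratio.numerator}:{ratio.denominator} is outside 1:3 to 3:1")
    return problems


for w, h in [(2048, 683), (683, 2048), (1536, 1024), (2048, 512), (4096, 2048)]:
    print(f"{w}x{h}", size_problems(w, h) or "ok")
```

A near-3:1 banner such as 2048x683 passes, while a 4:1 strip (2048x512) or an oversized 4096x2048 render is flagged before any request is sent.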

Introducing ChatGPT Images 2.0

A state-of-the-art image model that can take on complex visual tasks and produce precise, immediately usable visuals, with sharper editing, richer layouts, and thinking-level intelligence.

Video made with ChatGPT Images pic.twitter.com/3aWfXakrcR

OpenAI (@OpenAI), April 21, 2026

Why Your Work Changes This Week

The practical story is about friction removed. A marketing manager used to need three resources for one campaign: one tool for the hero image, another for copy overlays, and a designer to fix the broken text. ChatGPT Images 2.0 covers all three, with readable text, in one prompt. OpenAI is calling the new positioning "a visual thought partner," and the framing actually lands once you see the outputs.

Slide decks are the clearest use case. Anyone who has tried to generate an infographic with AI knows the pattern: the image looks great until you zoom in and the text says "Revnue Growth Charrt." Images 2.0 fixes that. It produces maps with legible legends, diagrams with correct labels, and dense UI screenshots that pass a squint test. A single prompt can now generate a full set of consistent visuals for a quarterly review, with matching style and real words.

The eight-image generation feature is the sleeper hit. The days of prompting eight times and praying for style consistency are over. A brand manager can now ask for "eight variations of our Q2 campaign hero, same character, different settings," and the system returns a usable set. Canva creative strategist Dwayne Koh said the model went beyond rendering: it was "interpreting briefs, understanding audiences, and making creative decisions." That is the job description of a mid-level creative, compressed into a prompt.

The Access and Pricing Reality

Every ChatGPT user, including those on the free tier, has access to Images 2.0 now. Codex users get image generation inside their coding environment without a separate API key, which matters for developers building internal tools. The thinking features sit behind ChatGPT Plus, Pro, and Business subscriptions, with an Enterprise rollout coming soon.

Developers access gpt-image-2 through the standard API. Pricing is token-based and works out to roughly $0.21 per high-quality 1024×1024 image in standard mode, per OpenAI's published rates. Thinking mode adds reasoning tokens on top, so layout-heavy prompts cost more. Outputs above 2K remain in API beta and may produce inconsistent results, so production workflows should stick to 2K and below for now.
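
For budgeting, the quoted rate turns into simple arithmetic. The sketch below takes the article's roughly $0.21 per high-quality 1024×1024 standard-mode image at face value; because reasoning-token usage varies by prompt, the Thinking-mode cost is modeled as a hypothetical flat percentage surcharge that you would calibrate against real invoices. `estimate_cost` is an illustrative helper name.

```python
from decimal import ROUND_HALF_UP, Decimal

# Article's figure: roughly $0.21 per high-quality 1024x1024 image in standard mode.
STANDARD_COST_PER_IMAGE = Decimal("0.21")


def estimate_cost(images: int, thinking_surcharge: Decimal = Decimal("0")) -> Decimal:
    """Rough USD estimate for a batch of high-quality 1024x1024 images.

    thinking_surcharge is a HYPOTHETICAL fractional markup for reasoning tokens
    (e.g. Decimal("0.5") means +50%); actual reasoning-token cost varies by prompt.
    """
    if images < 0:
        raise ValueError("images must be non-negative")
    if thinking_surcharge < 0:
        raise ValueError("thinking_surcharge must be non-negative")
    total = STANDARD_COST_PER_IMAGE * images * (1 + thinking_surcharge)
    # Round to whole cents for reporting.
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(estimate_cost(8))                  # 1.68  (one eight-image set, standard mode)
print(estimate_cost(8, Decimal("0.5")))  # 2.52  (same set with an assumed +50% for reasoning)
print(estimate_cost(40 * 8))             # 67.20 (forty such sets)
```

`Decimal` is used instead of `float` so that currency totals stay exact and round predictably to cents.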

The previous default, GPT-Image-1.5, is being retired from that role but remains available via the API for teams that need to keep existing pipelines stable. Alongside Images 2.0, OpenAI also launched Codex Labs, a training service aimed at helping enterprises deploy its Codex programming assistant across developer teams. The combination signals a bigger pattern: OpenAI is moving from selling models to selling workflows.

The Image Model Race Just Got Tighter

OpenAI did not launch in a vacuum. Google released Nano Banana 2, also known as Gemini 3 Pro Image, in February 2026. That model already offered dense text rendering baked into images, which is exactly the benchmark Images 2.0 is trying to dominate. Anthropic continues pushing Claude's multimodal capabilities. The frontier is now a three-way race, and the clear winner is the professional who learns to prompt well.

Photorealism is where OpenAI hopes to find its next viral moment. OpenAI researcher Gabriel Goh, in the launch stream, flagged photorealism as the style he is most excited about. The stakes are commercial. ChatGPT crossed 900 million weekly active users earlier this year, and a viral image-generation moment like the 2025 Ghibli craze could push the platform past one billion. The company needs that growth story as competition from Google and Anthropic intensifies.

Watch for two things in the next 60 days. First, how fast Google responds with a Nano Banana 3 or equivalent refresh. Second, how quickly teams rebuild their content pipelines around reasoning image models. The gap between "AI image generator" and "visual thought partner" just became real. Companies that close it first will move much faster than the ones still uploading stock photos.