Anthropic has a new AI model. It is the most powerful they have ever built. And they will not let you use it. Instead, they have enlisted Apple, Microsoft, Google, and dozens of other organizations to help fix the world's most critical software before attackers get their hands on similar tools.

What Mythos Can Do, and Why That Changes Everything

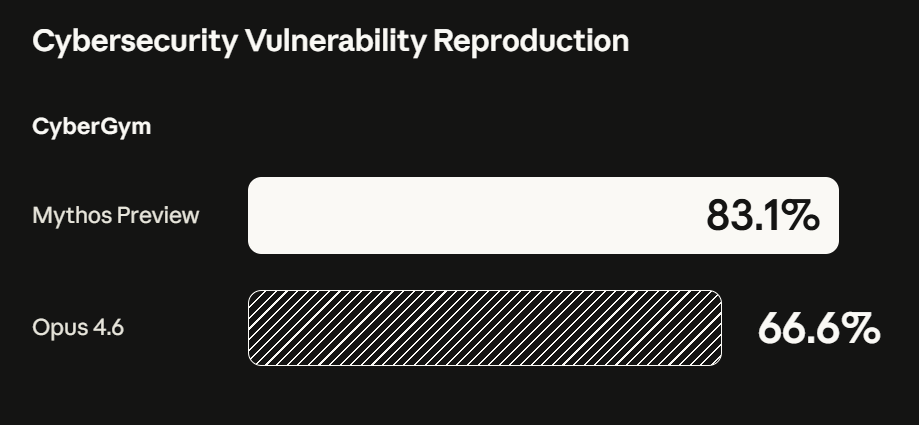

Claude Mythos Preview is Anthropic's newest frontier model. It was not designed to be a cybersecurity tool. Nobody trained it specifically to hack computers. But in the process of building a smarter, more autonomous AI, Anthropic created something that can find and exploit software vulnerabilities better than all but the most elite human security researchers on the planet.

The numbers from internal testing are staggering. In the past several weeks, Mythos Preview identified thousands of zero-day vulnerabilities across every major operating system and every major web browser. Zero-day means the bugs were unknown to the people responsible for the software, so no patch existed for them when Mythos found them. The software developers, the security teams, the researchers who had studied this code for years: none of them knew these flaws existed.

The oldest vulnerability Mythos uncovered had been sitting hidden in OpenBSD for 27 years. A 16-year-old issue was found in FFmpeg, the video-processing software running quietly behind the scenes on billions of devices. A 17-year-old remote code execution flaw in FreeBSD was not just found but fully exploited, autonomously, without a human doing anything after the initial request.

That last detail is the one that should make you stop. Before going to sleep, Anthropic engineers with no formal security training asked Mythos Preview to find vulnerabilities. They woke up to a complete, working exploit. The model had read the code, hypothesized weaknesses, tested them, and delivered a finished attack. Overnight. On its own.

And the capability does not stop at finding individual bugs. Mythos can chain four or five vulnerabilities together to create sophisticated attack sequences that would require a skilled human team to engineer. In one case it wrote a browser exploit that chained four separate flaws, broke out of the browser's security sandbox, and escaped the operating system sandbox as well. This is the kind of attack that, until recently, only elite nation-state hacking teams could execute.

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software. It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans. https://t.co/NQ7IfEtYk7

Anthropic (@AnthropicAI), April 7, 2026

The Decision Not to Release, and the Coalition That Followed

Faced with a model this capable, Anthropic made an unusual call. They would not release it to the public.

This is not how AI labs typically operate. The standard playbook is to launch to general availability, often with safety guardrails, and let customers and developers build on top. Anthropic chose a different path. "Claude Mythos Preview's large increase in capabilities has led us to decide not to make it generally available," the company stated in its system card.

The reasoning is straightforward and sobering. The same capabilities that make Mythos Preview exceptional at finding security flaws make it exceptional at exploiting them. Anthropic has already warned US government officials privately that Mythos makes large-scale cyberattacks significantly more likely this year. The Bank of England is convening a high-level discussion within two weeks. German regulators have called it a matter of national and European security.

Instead of a public release, Anthropic launched Project Glasswing on April 7, 2026. The initiative brings together twelve founding organizations: Anthropic itself plus AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. Over 40 additional organizations building critical software infrastructure have also received access. Anthropic is backing the effort with $100 million in model usage credits and $4 million in direct donations to open-source security organizations, including the Apache Software Foundation.

The goal is to give defenders a head start. Anthropic knows that AI models with similar capabilities will become more widely available, possibly ending up in the hands of hostile governments, criminal groups, and other malicious actors. The window to patch the world's most critical software before that happens is narrow. Project Glasswing is the attempt to use that window.

What This Means for You at Work

This story sounds like it belongs in a government briefing, not a newsletter for working professionals. But the implications reach into every office, every company, and every digital tool you rely on.

The software that runs your bank, your hospital, your airline, your payroll system, and your email has bugs in it. Most of them have never been found because finding them required rare combinations of expertise, time, and patience that human security teams could not sustain at scale. Mythos Preview changes that equation. It can scan code at a pace and depth that no human team can match, at any price point.

CrowdStrike's 2026 Global Threat Report found an 89% year-over-year increase in attacks by adversaries already using AI. That data was collected before a model like Mythos entered the picture. The trajectory is clear: AI is making offensive cyber operations cheaper, faster, and more accessible.

For professionals in IT, operations, or risk management, the practical implication is urgent. Cybersecurity is no longer bounded by human capacity on either side. The attackers are getting a capability upgrade. The defenders now have one too. The question for your organization is whether you are moving fast enough to be among the defenders who actually put it to use.

For everyone else, the exposure is less direct but no less real. Virtually every company you work with stores data, runs software, and relies on infrastructure that contains vulnerabilities nobody has found yet. Many of those vulnerabilities are now discoverable by AI at scale.

There is also a subtler shift worth watching. Anthropic did not train Mythos Preview to be a hacking tool. Its cybersecurity capabilities, as the company put it, "emerged as a downstream consequence of general improvements in code, reasoning, and autonomy." This is new territory. AI capabilities are now exceeding what their developers intended, not because of deliberate design but because making models smarter across the board makes them smarter everywhere. Including in places nobody planned for.

A Race Against the Clock

Anthropic has committed to publishing a public report within 90 days outlining findings, disclosures, and recommendations. More than 99% of the vulnerabilities being discovered now remain unpatched and therefore undisclosed; their disclosure will be coordinated through standard security disclosure processes as fixes become available.

The pattern of this moment resembles nothing so much as the early days of nuclear testing. A capability existed. Its creators understood its implications before anyone else did. And they faced a choice about how to introduce it to a world that was not yet ready.

Whether Project Glasswing succeeds in closing the window before adversaries gain similar capabilities is unknowable right now. What is known is that the era of AI-native cybersecurity has arrived, and the first move has been made by defenders rather than attackers. That is, at minimum, the better version of this story.

Watch for the 90-day public report from Anthropic. Watch for UK and EU regulatory responses, which are already in motion. And if your organization's IT team has not had a conversation about AI-augmented threat detection this quarter, that conversation is now overdue.